Generate AI Video and Images — Try It Now

Kling, Veo, Seedream, GPT Image, and more — all accessible from the homepage. Start with a text prompt or upload a reference image and get your output in minutes.


AI Video and Image Creations

Browse outputs from Kling, Veo, Seedream, GPT Image, and more — cinematic clips, animated stills, and high-resolution images. Explore what's possible before you generate.

What Is Happy Oyster AI?

Happy Oyster AI is an open-ended world model for real-time world creation and interaction. Built on a natively multimodal architecture supporting text, voice, and image inputs with joint audio-video generation, it produces continuous, physics-consistent environments where lighting, gravity, object positions, and character motion stay coherent across the entire session. Unlike standard AI video tools that follow a one-shot workflow (one prompt, one finished clip), Happy Oyster keeps listening and responding throughout generation. The world evolves in real time as you direct it. Often written as happyoyster in developer and creator communities, the model marks a shift from passive video generation to active world simulation.

Happy Oyster operates in two modes. Directing Mode functions as a real-time production studio: users steer scenes for up to three minutes at 480p or 720p by issuing text, voice, or image instructions at any point during generation — switching camera angles, redirecting characters, or rewriting the narrative without restarting. The world responds instantly and continues from exactly where it was. Wandering Mode places users inside a generated environment in first-person view, navigable with WASD and camera controls for up to one minute at 480p. The world expands procedurally as users move, maintaining consistent object placement, spatial physics, and environmental continuity throughout the session.

The team behind Happy Oyster previously released HappyHorse, which topped global text-to-video benchmarks. Happy Oyster was unveiled on April 16, 2026, marking a shift from clip generation toward live, interactive world simulation. Target applications include real-time film pre-production, game world prototyping, and interactive storytelling where viewer choices shape the outcome. This platform is built in the spirit of that vision: it offers video, image, and audio generation tools powered by Kling, Veo, Seedream, GPT Image, and more while the Happy Oyster world model is on the way.

AI Engines on This Platform

Video, image, and audio models — each optimized for a specific creative task, all accessible from one account.

Happy Oyster AI

Video

Happy Oyster AI is an open-ended world model generating continuous, interactive 3D environments in real time. Directing Mode steers scenes up to three minutes through multimodal input. Wandering Mode delivers first-person exploration through procedurally expanding worlds. Physics-consistent lighting, motion, and spatial continuity across the full session. The world model is on the way.

Kling

Video

Kuaishou's video engine built on 3D VAE spatial modeling. Co-generates video and audio in a single pipeline: synchronized dialogue, sound effects, and background music produced alongside the visual output. Supports text-to-video, image-to-video, multi-shot sequencing up to 15 seconds, Motion Control for character animation, and AI Avatar for lip-sync video.

Veo

Video

Google DeepMind's cinema-grade video generator producing 8-second clips at broadcast-quality resolution. Built-in AI audio generates synchronized sound without post-production. Leads in cinematic scene composition and environmental realism. Supports first-and-last-frame control and reference-style video generation.

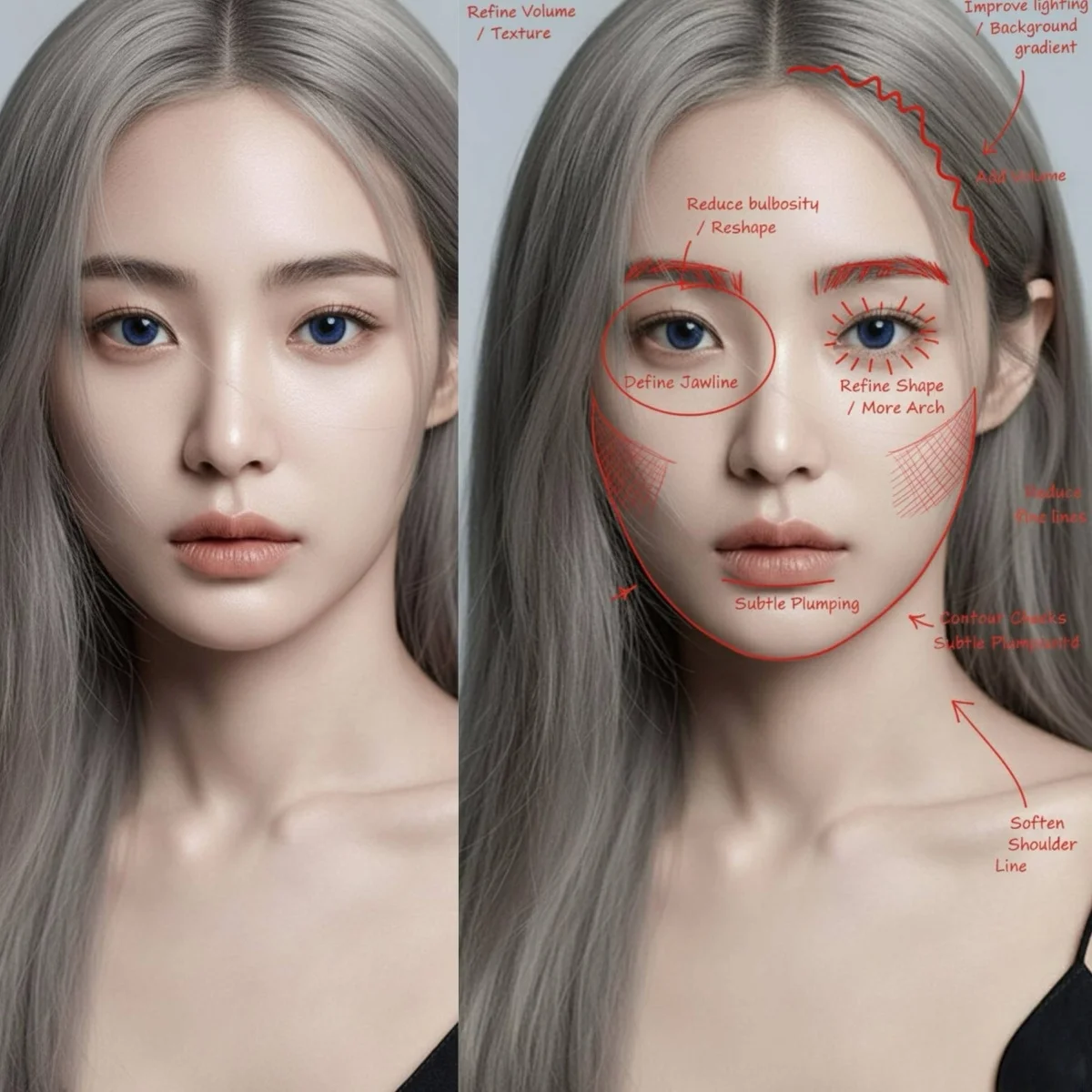

GPT Image

Image

OpenAI's image model, ranked #1 on LMArena, Design Arena, and Artificial Analysis Image Arena, three independent benchmarks specifically scoring text rendering accuracy inside generated images. The direct choice for any prompt where legibility, typography, or branded graphic accuracy is non-negotiable.

Flux Pro

Image

Black Forest Labs' production-grade image engine with a benchmark-leading win rate in head-to-head comparisons. Generates at 1K and 2K across seven aspect ratios. Built for throughput: product batches, social content, and rapid iteration where generation speed is the constraint.

Nano Banana

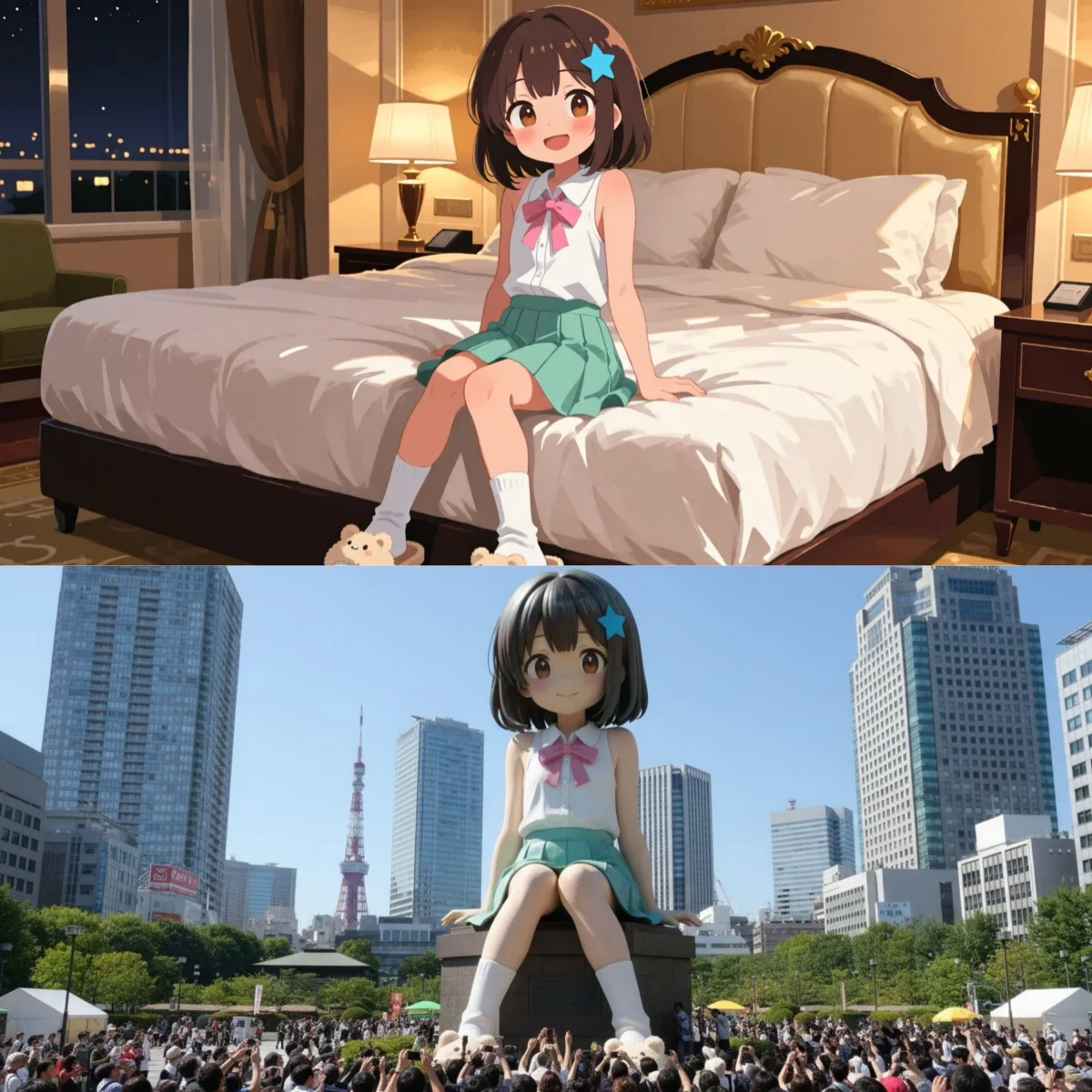

Image

Google's character-consistency image engine. Accepts up to 8 reference images in text-to-image mode to anchor face, hairstyle, clothing, and brand mark across every generation in a series. Nano Banana 2 adds Google Search grounding for real-world subject accuracy, support for 14 reference images, and 15 aspect ratios.

Seedream

Image

ByteDance's native 4K image engine outputting up to 4096×4096 px across eight aspect ratios including 21:9 ultrawide. Seedream 5 Lite applies Chain-of-Thought visual reasoning for scenes with complex spatial relationships, multiple figures, or precise compositional requirements.

Runway Gen-4

Video

Runway's Gen-4 Aleph for AI video editing. Transform existing video footage with text prompts: style transfer, object modification, and scene changes, all while preserving the original motion path. Multiple aspect ratios with professional-grade output quality.

AI Creative Tools for Every Format

Video, image, motion, and audio — multiple AI engines in one platform, optimized for different creative tasks and output requirements.

AI Video Generator

Happy Oyster AI generates video and sound in a single pass — no separate audio step, no sync issues. Kling 3.0 delivers native 4K with bilingual audio. Veo 3.1 renders 48 kHz spatial stereo for cinematic-grade productions. Start free.

Create Video

AI Image Generator

GPT Image 2 and GPT Image 1.5 for text-accurate graphics, Seedream 4.5 for native 4K scenes, Flux 2 Pro for rapid batch work, Nano Banana Pro for consistent characters. One workspace, every creative task — free to start, no watermark.

Create Image

Why Build with Happy Oyster AI

The platform built around the world model vision — with AI video, image, and audio tools available to creators right now.

Built Around the World Model Vision

Happy Oyster AI defines a new standard for AI-generated content — persistent, responsive environments rather than single clips. The tools on this platform are built in that spirit: physics-consistent video engines, character animation systems, and multimodal workflows designed for creators who think in scenes and worlds, not just frames.

Spatial Consistency Across Every Frame

Kling's 3D VAE architecture models object depth, surface physics, and lighting frame by frame — not as post-processing, but embedded in the generation pipeline. Objects stay grounded, lighting shifts naturally, and motion follows physical rules. It is the closest commercially available video engine to the world model standard for spatial coherence.

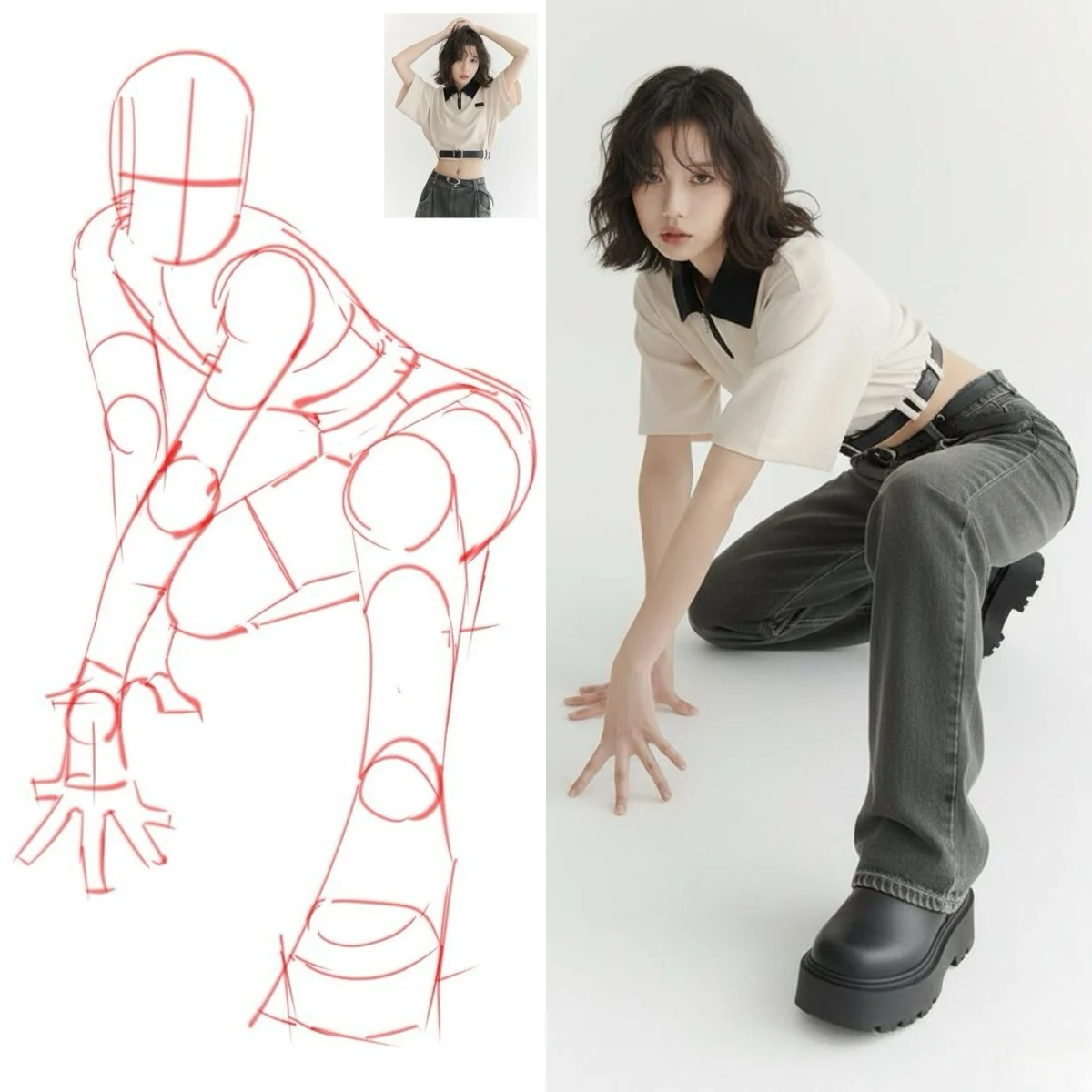

Character Control at Frame-Level Precision

Motion Control transfers reference video movement onto character images with full-body synchronization, capturing joint positions, weight transfer, and micro-gestures down to individual fingers. Dance choreography, sign language, martial arts, and performance sequences can be generated from a reference clip and a single character image with no tracking equipment.

Every Leading Engine, One Account

Veo 3.1 for cinema-grade output with integrated AI audio. Kling 3.0 for native audio co-generation and motion control. Wan for HD image-to-video. Runway Gen-4 Aleph for AI video editing. GPT Image for text-accurate graphics. Seedream 4.5 for native 4K. Flux 2 Pro for batch generation speed. Run them on the same prompt and ship the output that performs.

Generate Now, No Equipment Required

No GPU, no software installation, no motion capture hardware, no render farm. Open the platform, write a prompt or upload a reference file, and generate. Watermark-free output on paid plans — commercially licensed for social media, advertising, product content, film pre-production, and client deliverables.

How to Create AI Video and Images

Three steps from prompt to finished output — no technical knowledge or hardware required.

Write your prompt or upload a reference

Describe the scene you want to create in plain language — setting, subject, mood, and motion. For image-to-video or motion control, upload a still image or reference clip. The same interface handles text-to-video, image-to-video, text-to-image, and image-to-image workflows.

Select the AI engine for your task

Choose from Kling, Veo, Wan, Runway, GPT Image, Seedream, Flux, and more. Each model is optimized for a specific output type — native audio co-generation, 4K resolution, cinematic quality, batch speed, or character consistency. Pick the engine that matches your brief.

Download and use commercially

Generation takes seconds to a few minutes depending on model and resolution. Output arrives watermark-free on paid plans with full commercial licensing — ready for social media, advertising, film pre-production, product content, and client deliverables.

Happy Oyster AI — Frequently Asked Questions

Everything about the world model, platform tools, and how to get started.

The World Is Your Oyster — Start Creating

Generate AI video, images, and audio from any prompt. Kling, Veo, Seedream, GPT Image, Flux, and more — all in one platform. The Happy Oyster world model is on the way.